Why Meta Spends Trillions of Won a Year on Ad AI

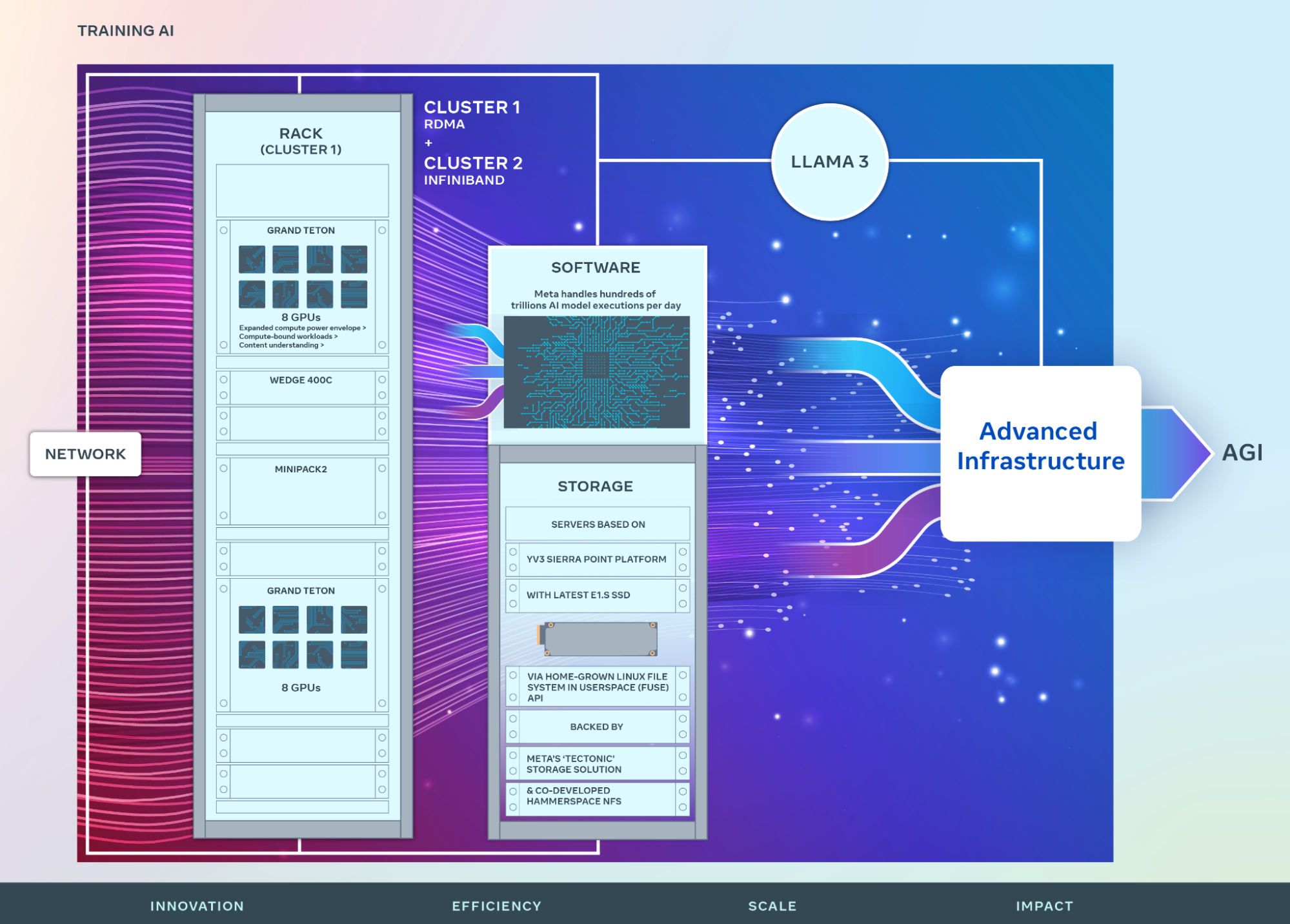

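In March 2024, the Meta Engineering blog announced that Meta had built two clusters of roughly 24,000 GPUs each and put them to work training GenAI and recommendation models. These clusters are the hardware foundation behind later announcements such as Llama 3, Andromeda, and GEM.

For advertisers, this is the background to the question "Why does Meta keep pushing Advantage+ and GEM?"

Source: Meta Engineering, "Building Meta's GenAI Infrastructure"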

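What Is a 24K GPU Cluster?

Figures Meta has published:

- GPU count: 24,576 × 2 (NVIDIA H100)

- Network: two variants run in parallel, one on RoCE (Ethernet) and one on InfiniBand

- Use: Llama 3 training, plus training of recommendation and ranking models

24K GPUs is an enormous scale. Training a single very large AI model takes roughly this much hardware as a baseline. The announcement amounts to a declaration that resources on this scale will go into training ad ranking models such as GEM.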

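What This Means for Advertisers

1. The background to "Why is Advantage+ doing better than last year?"

Advantage+ performance goes up even though the advertiser has not changed any settings. The reason is that central models such as GEM and Andromeda keep being trained, so every ad product built on top of them improves automatically. The 24K GPU clusters are what make this possible.

2. AI creative generation can go mainstream

As Llama 3 and future Llama models are released as open source, AI creative features such as Advantage+ Creative get smarter. Advertisers can produce a wider variety of creatives with less manual work.

3. Algorithm changes are speeding up

More GPU capacity shortens the model training iteration cycle. Adjustments that Meta used to make monthly are moving to a weekly cadence, and once automation based on autonomous agents is layered on top, changes will likely become daily.

Advertisers need an operating rhythm that can keep up with this pace.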

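Meta's Infrastructure Investment (for Reference)

- 2024 capital expenditure (CapEx): $35-40B

- A large share of this goes to GPUs and data centers

- Second-largest in the industry (after Google)

Why Meta invests in AI infrastructure at this level: advertising accounts for about 98% of its total revenue. Ad quality is a matter of survival for the company, so raising ad accuracy with AI is the surest investment it can make.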

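So What Should We Do?

There is no lever for us to pull directly. To benefit from this trend, though:

- Increase the share of Advantage+: it is the most direct route to the benefits of the central models

- Maintain event quality: good data makes good models (garbage in, garbage out)

- Build a creative supply system: feed the AI varied signals so it can learn quickly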

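Emotional reactions to avoid:

- "AI is getting so powerful that ad costs will rise": if anything, better relevance pushes CPA down

- "AI will replace my job": strategy and validation stay with people; only execution and analysis get automated

- "The algorithm changes so often that it's confusing": judging by weekly averages instead of daily numbers resolves this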
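The weekly-average advice can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `trailing_cpa`, the 7-day window, and the daily figures are all hypothetical and do not come from Meta or any Meta API. It computes CPA as total spend divided by total conversions over the trailing window, which smooths out day-to-day swings.

```python
from typing import List, Optional


def trailing_cpa(
    spend: List[float], conversions: List[int], window: int = 7
) -> List[Optional[float]]:
    """Trailing-window CPA: total spend / total conversions over the last `window` days.

    Returns None for days that lack a full window or have zero conversions.
    """
    if len(spend) != len(conversions):
        raise ValueError("spend and conversions must have the same length")
    result: List[Optional[float]] = []
    for i in range(len(spend)):
        if i + 1 < window:
            result.append(None)  # not enough history yet
            continue
        window_spend = sum(spend[i + 1 - window : i + 1])
        window_conv = sum(conversions[i + 1 - window : i + 1])
        result.append(window_spend / window_conv if window_conv > 0 else None)
    return result


# Hypothetical daily figures for one campaign (illustrative only).
daily_spend = [100.0] * 8
daily_conversions = [10, 5, 20, 10, 8, 12, 5, 10]

daily_cpa = [s / c for s, c in zip(daily_spend, daily_conversions)]
weekly_cpa = trailing_cpa(daily_spend, daily_conversions)

print("daily CPA: ", daily_cpa)
print("weekly CPA:", weekly_cpa)
```

In this made-up data the daily CPA swings between 5 and 20, while the trailing 7-day CPA stays flat, so a decision based on the weekly figure would not react to any single noisy day.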

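Outlook for the Next 2-3 Years

Meta will keep adding GPUs. Official roadmap:

- End of 2024: on the order of 350,000 GPUs

- 2025-26: on the order of 1 million GPUs (among the industry leaders)

How far the quality of AI models trained at this scale will go remains to be seen. For advertisers, the CPA improvements, creative automation, and operational automation they already notice all point toward continued expansion.

Using Advantage+, interpreting metrics, and running operations in the AI era are covered in Volume 4 of the Meta Ads series.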